At the Playing Lean Facilitator Club, we had the pleasure of having Ash Maurya with us for an AMA. Ash is just about to release his new book called Scaling Lean. We asked him about the book project and many other things.

I’m really curious about your new book, and especially your own process that led up to writing it. Six years passed between Running Lean and Scaling Lean, which is a long time in our industry. What are the main changes you have seen since then? - @simenfur

Yes, I know… Time flies and I realized that I waited too long between books when I sat down to finally write Scaling Lean. I was struggling with squeezing 3 books into one :)

That said, a lot of the topics I address were raised in the many 1:1 conversations I had with people practicing Lean. While Running Lean provided a high-level roadmap for starting, people were struggling to stay lean post-MVP, especially as team size grew. Those were the problems I was tackling in my workshops. Using the model above,

- once I understand the right problems,

- I set out to formulate possible solutions,

- which I test at small scale (in this case with 20-30 ppl at a time)

- That’s where I iterate until there’s repeatability… lots of ideas die at this stage too :)

- Those that survive go through progressive scaling up… in this case being packaged into a book and tools

I guess when the book is published, the room for iterative improvement is limited?

Yes and no… A product is never finished, only released from time to time… One has to find other avenues to continue the story… maybe another edition, more content, etc. — in the case of a book at least.

Is the book accompanied by some sort of canvas or "hands on" templates? - Tore Rasmussen

Yes. In Scaling Lean, I am introducing 4 new tools to extend Lean Canvas.

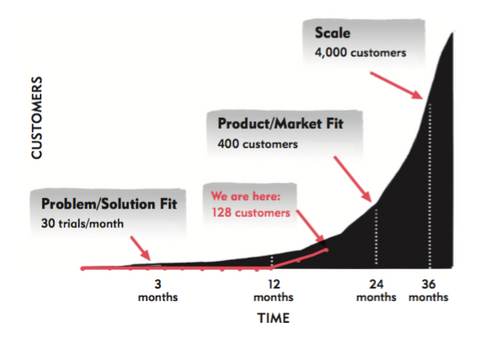

- The Traction Model: Talks to business model sizing and roadmap of the business

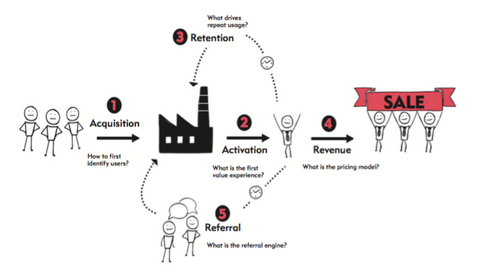

- The Customer Factory blueprint: The 5 AARRR metrics, drawn differently, help to communicate progress

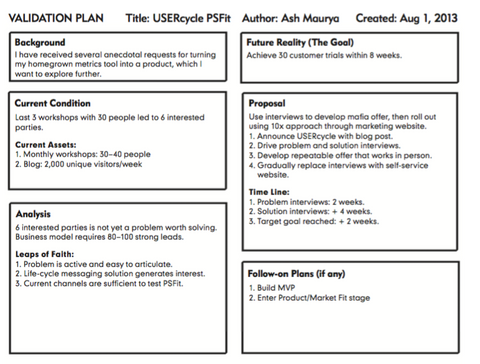

- 1-page Validation Plan: Collects ideas / strategies to test

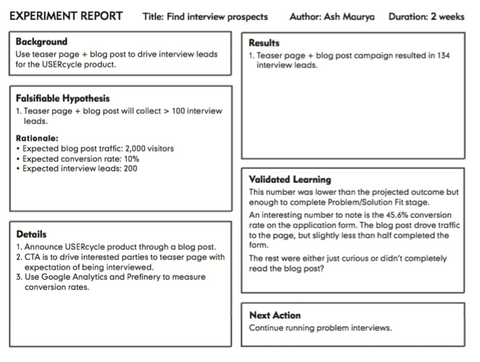

- 1-page Experiment Report: Provides a checklist for designing good experiments.

Could you tell us a little bit more about these tools? Especially the "1-page Validation Plan" and the "Traction Model" - @christinakseime

Here’s what a 1-page validation plan for a new idea might look like:

And a sample experiment:

The job of the traction model is to allow us to build a customer adoption model that we then test against:

This will be added to the leanstack software? - Tore Rasmussen

It’s already in there. Remember, I don’t do anything that isn’t repurposed at least half a dozen times :) Getting to these versions took two dozen iterations and lots of testing with live products and entrepreneurs.

Can you share with us the diagrams for "The Customer Factory blueprint: The 5 AARRR metrics drawn differently helps to communicate progress" as well?

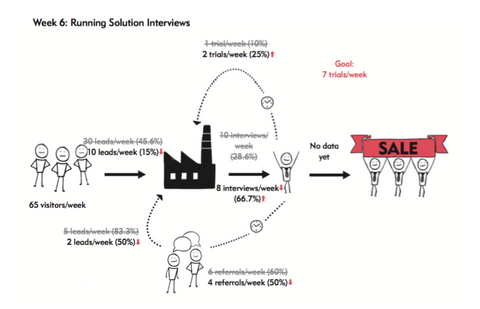

Here’s the CFB:

This is what it might look like with data:

Ash, where do you see points to add the metrics part from your new book into the board game? - @DillSven

In the book I make the point that Build/Measure/Learn misses a crucial planning step which we find in the original lean thinking with the PDCA loop. I believe before getting outside the building to run experiments, it’s critical to spend some time modeling the business — using tools like Lean Canvas and a traction model — which ties into metrics.

As Playing Lean facilitators, what do you think is the most important lesson we can help our players understand in workshops? - @simenfur

I think you guys already do a great job of reinforcing several fundamentals: Running experiments to uncover what customers want being the biggest one.

In practice though, any steps towards getting players to model what they expect to happen would be a great value add in my opinion.

I’m cognizant of the fact that you can’t teach everything all at once… so this may be better done as a post-activation step. It’s easier to get people to their biggest a-ha by playing a “safe” game before challenging their own ideas.

Assume you work in a company that is "old school" (i.e. silos, plan upfront, control/measure resources). They use Agile methods to some extent. They (the developers) have played "Playing Lean" and are very curious about Lean Startup. How would you recommend they approach this? I'm of course thinking of you now as a "behavioral psychologist" ;) - @christinakseime

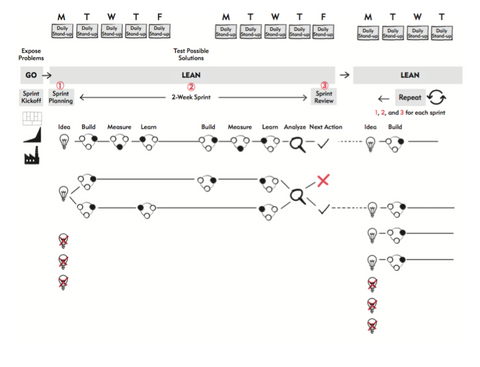

If a company is already agile aware… I simply get them to try running a few LEAN Sprints (which is another chapter in the next book)… Methodologies like agile get teams to build iteratively. The LEAN Sprint gets them to build/measure/learn iteratively. While agile is about build velocity, lean sprints are about traction velocity.

High-level overview of a typical LEAN Sprint:

Have you worked with researchers (like PhD doctoral students), and how do your ideas translate / what needs different language or different approach? - @KatiaVanBelle

I’ve worked with many researchers in the biotech, medtech, and cleantech fields. The language already resonates quite strongly if we simply get them to apply the same scientific rigor to the business model that they already do to their research.

Could you say that modelling the business equals having a clear vision behind your experiments? - @simenfur

I spent quite some time studying scientists and found that they always start with a problem, then build a model, which they validate/invalidate through experiments. Simply jumping to step 3 without steps 1 and 2 doesn’t usually lead to a breakthrough.

Can you tell a bit more about "scientific rigor applied to the business model"? - @KatiaVanBelle

I break the running lean process into 3 meta-principles:

- Document your Plan A

- Identify what’s riskiest in the plan

- Systematically test through experiments

Here’s the parallel from the Scientific Method:

- Build a model

- Compute consequences / make predictions

- Test against experiment

To quote Richard Feynman: If the model doesn’t agree with experiment, it is wrong.
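As a rough illustration of that parallel, here is a minimal Python sketch. The function names, the toy funnel model, the 20% tolerance, and all numbers are assumptions made up for this example; they are not taken from the book.

```python
def predicted_customers(visitors, signup_rate, purchase_rate):
    """Build a model and compute its consequence: how many paying
    customers we expect from a given number of visitors."""
    return visitors * signup_rate * purchase_rate


def agrees_with_experiment(predicted, observed, tolerance=0.2):
    """Test against experiment: the model holds only if the observed
    result is within a relative tolerance of the prediction."""
    return abs(observed - predicted) <= tolerance * predicted


# Hypothetical assumptions written down BEFORE running the experiment.
prediction = predicted_customers(visitors=1000, signup_rate=0.10, purchase_rate=0.20)

# Hypothetical experiment results.
model_holds_a = agrees_with_experiment(prediction, observed=18)
model_holds_b = agrees_with_experiment(prediction, observed=8)

print(f"predicted about {prediction:.0f} customers")
print("18 observed, model holds:", model_holds_a)
print("8 observed, model holds:", model_holds_b)
```

The point of the sketch is the order of operations: the prediction is fixed before the experiment runs, so a result far outside the tolerance counts against the model rather than being explained away afterwards.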

The main difference is that experimentation in a business isn’t just limited to product (solution). Everything you put in front of customers — your solution, marketing, pricing, pitches, etc. can and should be tested with that level of rigor.

I hear these PhD researchers often say that they have so many other tasks to do (assisting, teaching…), which causes them to lose track of the progress of their research. Any ideas on how metrics might help them keep the overview? - @KatiaVanBelle

I take a strong position in Scaling Lean that even the “validated learning” metric in the Lean Startup is not always enough UNLESS we can turn that learning into business results or traction. Traction is a measure of how a business model delivers and captures value from its customers. And at the end of the day, that’s what I use to measure, at a macro level, whether a team is making progress or not. I write, blog, and teach as well, but those activities all have a purpose: to test new ideas which eventually show up in other products I build.

The Lean Canvas, Running Lean, and the next book, Scaling Lean, were all created this way.

Ash, can you say something regarding the problems with vanity metrics? - @opfjellstad

Where do we start? :) I think it’s not so much what we measure but how that turns any metric from actionable to vanity. Revenue per month is a good metric, cumulative revenue is really bad.
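To make the revenue example concrete, here is a small Python sketch using invented monthly figures (the numbers are hypothetical and chosen only to show the effect). It compares how the same data looks as revenue per month and as cumulative revenue.

```python
from itertools import accumulate

# Hypothetical monthly revenue figures, invented purely for illustration.
monthly_revenue = [1000, 1200, 900, 700, 500]

# Cumulative revenue is the running total of the monthly figures.
cumulative_revenue = list(accumulate(monthly_revenue))


def month_over_month_change(values):
    """Return the difference between each consecutive pair of values."""
    return [later - earlier for earlier, later in zip(values, values[1:])]


monthly_change = month_over_month_change(monthly_revenue)
cumulative_change = month_over_month_change(cumulative_revenue)

print("monthly:          ", monthly_revenue)
print("cumulative:       ", cumulative_revenue)
print("monthly change:   ", monthly_change)
print("cumulative change:", cumulative_change)
```

In this made-up data the business shrinks for three months in a row, which the monthly series shows as negative changes. The cumulative series keeps rising the whole time, because a running total of non-negative numbers can never go down.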

The gold standard is really measuring everything in batches (or cohorts) but that can be really hard to do with everything. That’s why I suggest people just start with the 5 AARRR metrics - Acquisition, Activation, Retention, Referral, and Revenue.
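One possible way to measure the five AARRR metrics in batches is sketched below in Python. The data layout (a signup week plus the set of stages each user reached), the function name `cohort_rates`, and the sample users are all assumptions for illustration, not how any particular tool does it.

```python
from collections import defaultdict

# The five AARRR stages, in the order named in the text.
STAGES = ["acquisition", "activation", "retention", "referral", "revenue"]

# Hypothetical users: (signup week, set of stages the user reached).
users = [
    ("2016-W01", {"acquisition", "activation", "retention", "revenue"}),
    ("2016-W01", {"acquisition", "activation"}),
    ("2016-W01", {"acquisition"}),
    ("2016-W01", {"acquisition", "activation", "retention", "referral"}),
    ("2016-W02", {"acquisition", "activation", "retention", "referral", "revenue"}),
    ("2016-W02", {"acquisition", "activation", "retention", "revenue"}),
]


def cohort_rates(users):
    """Group users by signup week and return, for each cohort, the share
    of acquired users that reached each AARRR stage."""
    counts = defaultdict(lambda: dict.fromkeys(STAGES, 0))
    for week, reached in users:
        for stage in STAGES:
            if stage in reached:
                counts[week][stage] += 1

    rates = {}
    for week, stage_counts in counts.items():
        acquired = stage_counts["acquisition"]
        if acquired == 0:
            continue  # no acquired users, so no meaningful rate
        rates[week] = {s: stage_counts[s] / acquired for s in STAGES}
    return rates


rates = cohort_rates(users)
for week in sorted(rates):
    print(week, {stage: round(rate, 2) for stage, rate in rates[week].items()})
```

Because each week's users are measured separately, a change made in week two shows up as a difference between the two cohorts instead of being averaged into an all-time total.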

Have you seen the industry as a whole do innovation better since 2010, or are we generally just as clueless as before? - @simenfur

I’m an optimist and believe that yes, things have gotten better. I also carefully select who I work with, so there’s some built-in selection bias in there for sure… More seriously though, the biggest obstacle to overcome with anything new is behavior change. Whenever we can get a team to slow down and test fundamentals, I think innovation improves, even if that means killing an idea in 6 days and redeploying resources to other ideas.

I sometimes feel my job has shifted from technologist to behavioral psychologist — how to get people to do what they want and make it align with what they need.

Is there something you wish you could go back and rewrite in Running Lean with the experience you have today?

I am thinking of creating an updated edition to Running Lean… I think there could be more clarity drawn between MVP and an offer — which I still see lots of people getting mixed up.

I’m also not as big an advocate anymore of getting customers to rank problems… It’s okay but too subjective. It’s far more effective to rank them yourself based on what they told you they did or what they do after the interview…

How do you get rid of your own biases, then?

By listening more actively and paying attention to cues from what people did in the past, the words they used, and whether they’ll want to talk to you in the future and refer you to others.

After a batch of interviews, I recommend drawing a workflow diagram of how they get the job done today. After enough interviews, it’s fairly easy to draw. That’s where I layer in actual problem ranking, emotion, success criteria, and opportunities. It’s a page taken from Design Thinking: Customer journey mapping.

Regarding the promotion of your new book, "Scaling Lean": I see that you are giving Skype meetings. How many of those events do you think you will give, and how many books do you think you will sell? - Tore Rasmussen

I am running 4-5 sessions a month with an average of 40 people in the room… This is one strategy among many that we are implementing towards our goal of 10K pre-orders.

By the way, I’m turning that into an open case-study which I’m posting here:

https://blog.leanstack.com/an-authors-journey-to-100-000-copies-5cc01380173b

We’ll be sharing Lean Canvases, Validation Plans, and Experiments Reports along the way — we dogfood everything ;)

Happy dogfooding, Ash! And thank you so much for the visit at Playing Lean Facilitator Club!

If you want to become a member of the Playing Lean Facilitator Club, check out one of our training options - a live Playing Lean Facilitator training or a lighter version, the online Game Master training.
